Driving simulation company rFpro has developed what it claims is a means to “slash the hardware costs associated with large-scale simulation”. According to the company, the technology has the potential to remove the industry’s dependence on manual, frame-by-frame annotation of test data, which can be both time-consuming and error-prone.

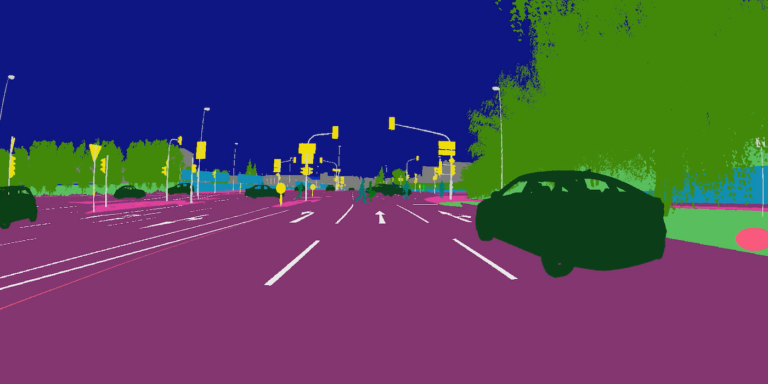

“Currently, many players in the autonomous vehicle (AV) field employ an army of people to manually annotate each frame of a video, LiDAR point or radar return to identify objects in the scene (such as other vehicles, pedestrians, road markings and traffic signals) to create training data,” said Matt Daley, managing director of UK-based rFpro. “This new approach provides a digital, cost-effective way of creating the same data completely error-free and 10,000 times quicker compared to manual annotation, which takes around 30 minutes per frame with a 10% error rate. This step-change will enable deep learning to fulfil its potential because it significantly reduces the cost and time of generating useful training data.”
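To put the quoted figures in perspective, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration only: the 30 minutes per frame and the 10,000-fold speed-up are the company's claims as quoted above, while the dataset size of 1,000 frames and the function names are hypothetical values chosen for the example.

```python
# Figures quoted by rFpro (claims, not independent measurements)
MANUAL_MINUTES_PER_FRAME = 30
CLAIMED_SPEEDUP = 10_000


def manual_seconds(frames: int) -> float:
    """Time to annotate `frames` frames by hand, in seconds."""
    return frames * MANUAL_MINUTES_PER_FRAME * 60


def farmed_seconds(frames: int) -> float:
    """Time implied by the claimed speed-up, in seconds."""
    return manual_seconds(frames) / CLAIMED_SPEEDUP


if __name__ == "__main__":
    frames = 1_000  # hypothetical dataset size, for illustration only
    print(f"Manual annotation: {manual_seconds(frames) / 3600:.0f} hours")
    print(f"At the claimed speed-up: {farmed_seconds(frames) / 60:.0f} minutes")
    print(f"Implied time per frame: {farmed_seconds(1):.2f} seconds")
```

On these assumptions, a 1,000-frame dataset that would take about 500 hours to annotate by hand would take about three minutes, or roughly 0.18 seconds per frame.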

rFpro calls the new approach Data Farming and compares it to Render Farming, which has revolutionised the economics of popular animation. The company says that Data Farming enables customers to build complete datasets that cover the full vehicle system, with every sensor simulated at the same time. The data is synchronised across all sensors, which is essential when customers use sensor fusion to combine data from, for example, multiple 8K HDR stereo cameras, LiDAR and radar sensors.

Data Farming is already being used by existing rFpro customers, including global Tier 1 supplier Denso ADAS Engineering Services. Francisco Eslava-Medina, a project manager at Denso ADAS, stated, “Through Data Farming we can create an extensive number of driving scenarios, allowing the generation of very large variations in scenes, all through the investment in a single platform. This allows us to quickly and cost-effectively generate the vast quantity of quality training data that is essential for certain product development phases of computer vision technologies, especially for neural networks for our autonomous vehicle technologies.”

rFpro says the approach lets customers start with as little as a single PC to run a complex simulation involving multiple sensors. “Simulations don’t have to be run in real-time, offering flexibility to the user around the computing power required,” added Daley. “For engineers, this puts it within a typical departmental budget, rather than requiring senior approval. High-quality training and test data is now far more accessible.”

Data Farming is fully scalable, allowing customers to expand across multiple hardware resources when they are ready to accelerate their data production.

Another customer that has successfully employed Data Farming is Ambarella, an autonomous vehicle technology provider. “The software presents a radical shift in creating training data and is already accelerating the development of our autonomous vehicle systems,” said Alberto Broggi, general manager of Ambarella’s division in Italy. “Deep learning and AI are critical to the successful adoption of autonomous vehicles. It may not be reasonably possible to get to the standard required only through the use of manually annotated data sets. Data Farming will transform the way the industry develops autonomous vehicles.”